Executive Summary

Building an AI portfolio that delivers enterprise impact requires an AI project prioritization methodology that is significantly more rigorous than most organizations expect. Key insights include:

- Most organizations lack a consistent, structured methodology for AI project prioritization. What passes for prioritization is often a negotiation shaped by advocacy, organizational influence, and whoever has the most momentum at the time.

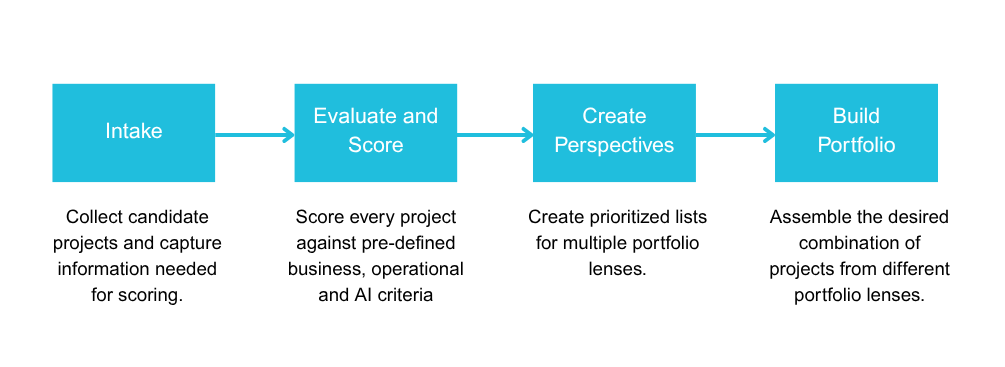

- Effective AI project prioritization follows three key steps: a structured collection process that surfaces all viable candidates and captures the information needed to evaluate each one fairly; a scoring process that converts a subjective, bias-prone evaluation into something numerical and more objective; and a ranking process that produces multiple prioritized views across different portfolio perspectives.

- Applying this methodology effectively requires both AI fluency and deep business and operational fluency. Neither alone is sufficient.

Introduction

For most organizations, AI project prioritization looks something like this. A handful of department heads enter a room, each carrying a list of initiatives they believe will transform their part of the business. Finance wants a forecasting model. Operations wants process automation. Marketing wants personalization at scale. HR mentions an attrition prediction tool. What follows is less a strategic discussion and more a negotiation, shaped by whoever argues most persuasively, holds the most organizational influence, or simply got on the agenda first. A project gets greenlit. Another gets shelved. The logic evaporates the moment the meeting ends. Next quarter, the conversation starts over.

This is not a technology problem. It is an AI project prioritization problem, and one of the most consequential gaps in how enterprises approach building an AI portfolio today.

In Part 1 of this series, we detailed the cost of getting this wrong. Organizations that manage AI through negotiation and advocacy rather than structured prioritization actively destroy value through resource cannibalization, blocking effects, and missed synergies. The organizations closing that gap are the ones that have replaced negotiation with methodology.

This piece explains what that methodology looks like, why each part of it is harder than it appears, and what separates organizations that treat AI project prioritization as a discipline from those that treat it as a meeting.

The Difference Between a Ranked List and an AI Portfolio

Before getting into how prioritization works, it is worth being precise about what it is meant to produce, because most organizations are aiming at the wrong target.

A ranked list is what you get when you score a set of projects and sort them from highest to lowest. It is useful. It is better than pure advocacy. But it is not an AI portfolio, and it will not produce portfolio-level returns.

The difference is the same as the difference between knowing which stocks look attractive today and knowing how to construct a mutual fund that performs across market conditions, manages risk deliberately, captures synergies between holdings, and compounds value over time. Both require judgment. Only one requires a methodology sophisticated enough to see the full picture.

A ranked list tells you which projects score highest in isolation. Building an AI portfolio tells you which combination of projects creates the most value together. That distinction is what AI project prioritization is actually trying to solve for, and it shapes every part of the methodology that follows.
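To make the distinction concrete, here is a minimal Python sketch. Every project name, score, cost, the budget, and the synergy bonus are invented for illustration; none of them come from a real portfolio. The sketch compares funding projects in rank order under a fixed budget with searching for the combination that is worth the most once a synergy between two projects is counted.

```python
from itertools import combinations

# Hypothetical candidates: name -> (standalone score, cost in team-months).
PROJECTS = {
    "forecasting": (9.0, 6),
    "automation": (8.0, 5),
    "personalization": (6.0, 3),
    "data_platform": (5.0, 3),
}

# Hypothetical synergy: extra value realized only when both projects are funded.
SYNERGIES = {frozenset({"personalization", "data_platform"}): 7.0}

BUDGET = 11  # assumed capacity, in team-months


def portfolio_value(names):
    """Standalone scores plus any synergy bonus whose projects are all in the set."""
    chosen = set(names)
    value = sum(PROJECTS[n][0] for n in chosen)
    value += sum(bonus for pair, bonus in SYNERGIES.items() if pair <= chosen)
    return value


def portfolio_cost(names):
    """Total cost of the named projects."""
    return sum(PROJECTS[n][1] for n in names)


def ranked_list_pick():
    """Walk the list from highest score down, funding each project that still fits."""
    picked, remaining = [], BUDGET
    for name in sorted(PROJECTS, key=lambda n: PROJECTS[n][0], reverse=True):
        cost = PROJECTS[name][1]
        if cost <= remaining:
            picked.append(name)
            remaining -= cost
    return picked


def best_combination():
    """Brute-force every subset within budget; workable only for a handful of projects."""
    best = ()
    for size in range(1, len(PROJECTS) + 1):
        for combo in combinations(PROJECTS, size):
            within_budget = portfolio_cost(combo) <= BUDGET
            if within_budget and portfolio_value(combo) > portfolio_value(best):
                best = combo
    return list(best)
```

With these made-up numbers, the ranked list funds the two highest scorers and exhausts the budget, while the combination search funds three lower-ranked projects whose combined value is higher because of the synergy. The brute-force search is only a teaching device; it says nothing about how any particular framework evaluates combinations.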

What Effective AI Project Prioritization Actually Requires

Effective AI project prioritization starts with a structured intake process that levels the playing field. In most organizations, the projects that make it onto the evaluation list are the ones with internal champions. High-potential ideas without a vocal sponsor never surface at all. An effective intake process actively solicits candidates across every relevant part of the business and captures a consistent set of inputs for each one, covering the problem it addresses, the value it is expected to deliver, the data it depends on, and any known risks or constraints. Without that foundation, no scoring process can evaluate projects on equal footing.

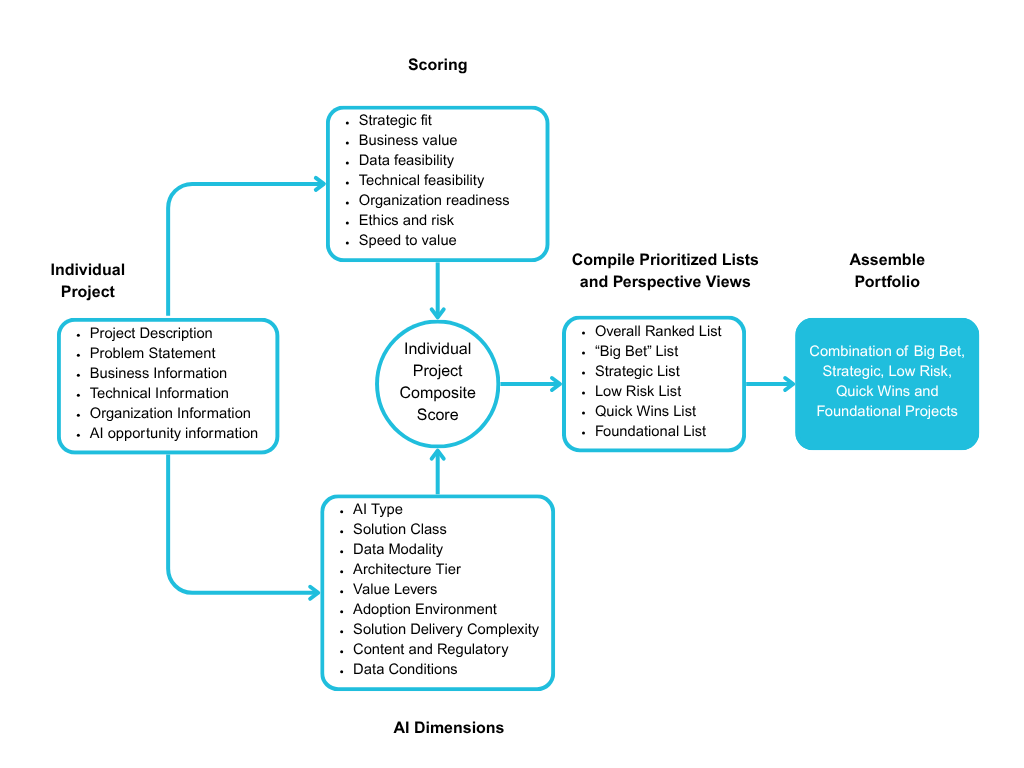

It also requires evaluation criteria that go beyond the business case. A project with a compelling return on investment that depends on data infrastructure that does not yet exist is not ready to execute. A technically feasible project that the organization lacks the change management capacity to adopt will stall in deployment. Effective AI project prioritization requires criteria that capture the full picture, including strategic fit, business value, data readiness, technical feasibility, organizational readiness, ethics and risk, and speed to value.

Even with structured criteria, scoring is vulnerable to bias. A text summarization tool and a multi-step autonomous agent workflow are both AI projects, but they differ enormously in data requirements, architectural complexity, risk, and the organizational capability needed to sustain them. A scoring process that does not account for those differences will consistently produce misleading scores. The solution is an approach that converts what is normally subjective and bias-prone into something numerical and more objective, with systematic adjustments that correct for what human judgment reliably gets wrong.

Consider two projects that appear similar on paper. Both involve generative AI, both have strong business cases, and both have enthusiastic sponsors. But one is a document summarization tool operating on clean internal text data with no regulatory constraints, and the other is a contract analysis system processing sensitive legal documents in a regulated environment requiring a complex multi-stage retrieval architecture. Evaluated purely on business value they might score similarly. Evaluated across the full set of criteria and adjusted for what each project actually requires to build, deploy, and sustain, they look very different, with direct implications for sequencing, resourcing, and risk management.

Finally, effective AI project prioritization produces multiple prioritized lists rather than one, each evaluated through a different lens, making visible what a single ranking cannot: sequencing dependencies, resource conflicts, and cross-project synergies that only appear when you look at the initiative landscape as a whole.

A Framework for Building an AI Portfolio: How We Approach It at Strategy of Things

The principles above describe what effective AI project prioritization requires. At Strategy of Things, we have developed the SoT AI Portfolio Prioritization Framework, a four-step process that operationalizes these principles in a structured, repeatable, and defensible way.

Step 1: Collect

Our intake process actively solicits candidates from across the entire organization rather than waiting for sponsors to surface them. It also captures a consistent set of structured inputs for every candidate, covering the problem statement, value drivers, data context, organizational readiness signals, and known risks and constraints. The output is a comprehensive, unfiltered inventory with each candidate documented well enough to be scored consistently alongside the rest.
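As a rough illustration of what a consistent set of structured inputs can look like, the sketch below models one intake record in Python. The schema is hypothetical: the field names simply mirror the inputs listed above, and `submitted_by`, `missing_inputs`, and `ready_to_score` are names invented for this example rather than part of the framework.

```python
from dataclasses import dataclass, field, fields


@dataclass
class Candidate:
    """One intake record. The schema is an assumption made for this example."""
    name: str
    submitted_by: str
    problem_statement: str = ""
    value_drivers: list[str] = field(default_factory=list)
    data_context: str = ""
    readiness_signals: list[str] = field(default_factory=list)
    risks_and_constraints: list[str] = field(default_factory=list)


def missing_inputs(candidate: Candidate) -> list[str]:
    """Return the names of inputs that are still empty."""
    return [f.name for f in fields(candidate) if not getattr(candidate, f.name)]


def ready_to_score(candidate: Candidate) -> bool:
    """A candidate can be scored once every input has been captured."""
    return not missing_inputs(candidate)
```

In this sketch an incomplete candidate is not rejected. It stays in the inventory, and `missing_inputs` tells the intake owner what to follow up on before scoring, which keeps the inventory unfiltered while still ensuring every project is scored on the same information.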

Step 2: Evaluate and Score

We evaluate every candidate against seven weighted criteria: strategic fit, business value, data feasibility, technical feasibility, organizational readiness, ethics and risk, and speed to value. Human evaluators assign scores using a consistent rubric. Those scores are then run through a structured algorithmic model that applies systematic adjustments based on nine AI dimensions that characterize the true nature of each project, including AI type, solution class, data modality, architecture tier, data conditions, adoption environment, solution delivery complexity, and content and regulatory sensitivity. The result is a composite score that reflects both potential value and genuine executability, comparable fairly across the entire project landscape.
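The mechanics of a weighted score with systematic adjustments can be sketched in a few lines of Python. This is a simplified illustration, not the actual model: the weights, the `ADJUSTMENTS` table, the multiplier values, the dimension values such as `single_call`, and the choice to apply adjustments as multipliers on rubric scores are all assumptions made for the example. Only two of the dimensions named above are used.

```python
# Assumed weights for the seven criteria (they sum to 1.0).
CRITERIA_WEIGHTS = {
    "strategic_fit": 0.20,
    "business_value": 0.20,
    "data_feasibility": 0.15,
    "technical_feasibility": 0.15,
    "organizational_readiness": 0.10,
    "ethics_and_risk": 0.10,
    "speed_to_value": 0.10,
}

# Hypothetical adjustment table: (dimension, value) -> multipliers on rubric scores.
# Dimension values not listed here leave the scores unchanged.
ADJUSTMENTS = {
    ("architecture_tier", "multi_stage_retrieval"): {
        "technical_feasibility": 0.7,
        "speed_to_value": 0.8,
    },
    ("regulatory_sensitivity", "regulated"): {"ethics_and_risk": 0.7},
}


def adjust(raw_scores, dimensions):
    """Apply every matching multiplier to a copy of the evaluators' rubric scores."""
    adjusted = dict(raw_scores)
    for dimension_value in dimensions.items():
        for criterion, factor in ADJUSTMENTS.get(dimension_value, {}).items():
            adjusted[criterion] *= factor
    return adjusted


def composite(scores, weights=CRITERIA_WEIGHTS):
    """Weighted sum of the per-criterion scores."""
    return sum(weight * scores[criterion] for criterion, weight in weights.items())


# Evaluators give both projects the same rubric scores (1 to 5, higher is better).
RAW = {criterion: 4 for criterion in CRITERIA_WEIGHTS}

SUMMARIZER_DIMS = {"architecture_tier": "single_call", "regulatory_sensitivity": "none"}
CONTRACT_DIMS = {
    "architecture_tier": "multi_stage_retrieval",
    "regulatory_sensitivity": "regulated",
}

summarizer_score = composite(adjust(RAW, SUMMARIZER_DIMS))
contract_score = composite(adjust(RAW, CONTRACT_DIMS))
```

Before adjustment the two projects are indistinguishable. After adjustment the summarization tool keeps its composite of about 4.0, while the contract analysis system drops to about 3.62 because its architecture and regulatory exposure discount three of its criteria. The specific numbers matter less than the pattern: identical human scores, different composite scores.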

The criteria weights are calibrated to each organization’s strategic priorities and risk tolerance. An organization under competitive pressure to move quickly may weight speed to value more heavily. A heavily regulated organization may weight ethics and risk more heavily. That calibration conversation is itself valuable, because it forces explicit alignment on what the organization actually believes matters most, rather than leaving those priorities implicit and contested.
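A small Python sketch shows why calibration matters: the same rubric scores can produce opposite rankings under different weight profiles. The projects, scores, and the idea of expressing emphasis as relative "importance points" are assumptions made for this illustration, and `calibrate` and `rank` are hypothetical helper names.

```python
CRITERIA = [
    "strategic_fit", "business_value", "data_feasibility", "technical_feasibility",
    "organizational_readiness", "ethics_and_risk", "speed_to_value",
]


def calibrate(emphasis):
    """Turn relative importance points into weights that sum to 1.0.

    Criteria not mentioned in `emphasis` default to 1 point.
    """
    points = {criterion: emphasis.get(criterion, 1) for criterion in CRITERIA}
    total = sum(points.values())
    return {criterion: value / total for criterion, value in points.items()}


def rank(projects, weights):
    """Return project names ordered from highest to lowest weighted score."""
    def score(name):
        return sum(weights[c] * projects[name][c] for c in CRITERIA)
    return sorted(projects, key=score, reverse=True)


# Hypothetical rubric scores (1 to 5, higher is better).
# A high ethics_and_risk score here means low risk.
BASELINE = {criterion: 3 for criterion in CRITERIA}
PROJECTS = {
    "support_chatbot": {**BASELINE, "speed_to_value": 5, "ethics_and_risk": 2},
    "claims_triage": {**BASELINE, "speed_to_value": 2, "ethics_and_risk": 5},
}

SPEED_FOCUSED = calibrate({"speed_to_value": 3})
RISK_FOCUSED = calibrate({"ethics_and_risk": 3})
```

Calling `rank(PROJECTS, SPEED_FOCUSED)` puts the chatbot first, and `rank(PROJECTS, RISK_FOCUSED)` puts claims triage first. Nothing about either project changed; only the organization's stated priorities did, which is why the weights need to be agreed explicitly rather than left implicit.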

Step 3: Create Perspective Views

The composite scores feed into multiple ranked views, each representing a different portfolio lens: quick wins, strategic investments, big bets, and foundational priorities. Together these views surface sequencing dependencies, resource conflicts, and cross-project synergies that a single ranked list cannot show.
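One way to picture multiple ranked views is as the same scored inventory sorted by different keys. The Python sketch below does exactly that. The lens definitions are guesses made for illustration (for example, treating a big bet as high business value paired with low technical feasibility, and a foundational project as one that other projects depend on); they are not the framework's actual definitions, and the projects and scores are invented.

```python
# Hypothetical scored candidates; `enables` lists projects that depend on this one.
PROJECTS = [
    {"name": "doc_summarizer", "composite": 4.0, "speed_to_value": 5,
     "strategic_fit": 3, "business_value": 3, "technical_feasibility": 5, "enables": []},
    {"name": "contract_analysis", "composite": 3.6, "speed_to_value": 2,
     "strategic_fit": 5, "business_value": 5, "technical_feasibility": 2, "enables": []},
    {"name": "data_platform", "composite": 3.2, "speed_to_value": 2,
     "strategic_fit": 4, "business_value": 2, "technical_feasibility": 4,
     "enables": ["contract_analysis", "demand_forecast"]},
    {"name": "demand_forecast", "composite": 3.8, "speed_to_value": 3,
     "strategic_fit": 4, "business_value": 4, "technical_feasibility": 3, "enables": []},
]

# Assumed lens definitions: each maps a project to a sort key (higher sorts first),
# with the composite score as the tie-breaker.
LENSES = {
    "quick_wins": lambda p: (p["speed_to_value"], p["composite"]),
    "strategic_investments": lambda p: (p["strategic_fit"], p["composite"]),
    "big_bets": lambda p: (p["business_value"] - p["technical_feasibility"], p["composite"]),
    "foundational_priorities": lambda p: (len(p["enables"]), p["composite"]),
}


def build_views(projects, lenses=LENSES):
    """Return one ranked list of project names per lens."""
    return {
        lens: [p["name"] for p in sorted(projects, key=key, reverse=True)]
        for lens, key in lenses.items()
    }


VIEWS = build_views(PROJECTS)
```

In this toy data the data platform ranks last on composite score yet first in the foundational view, because two other candidates depend on it. That is the kind of sequencing dependency a single sorted list would bury.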

Step 4: Assemble Portfolio

From the ranked views, leadership assembles the final AI portfolio by making deliberate choices about the mix. One organization might pursue one big bet, two strategic investments, several quick wins, and one foundational project. Another might construct a very different combination based on its maturity and competitive context. The methodology does not prescribe the answer. It gives leadership a structured, evidence-based foundation to make that choice deliberately and defend it clearly. The decisions still belong to the people in the room.
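The assembly step can be supported by a simple drafting aid like the Python sketch below. It is an illustration only: the view contents, the mix, and the `draft_portfolio` helper are hypothetical, and the rule of filling each slot from the top of a view while skipping projects already chosen is an assumption made for this example. The output is a starting point for discussion, not a decision.

```python
def draft_portfolio(views, mix):
    """Fill each view's slots from the top of its ranking, never picking a project twice.

    views: view name -> project names, best first.
    mix:   view name -> number of slots leadership wants from that view.
    Views are processed in the order they appear in `mix`.
    """
    selected, taken = {}, set()
    for view_name, slots in mix.items():
        picks = []
        for project in views[view_name]:
            if len(picks) == slots:
                break
            if project not in taken:
                picks.append(project)
                taken.add(project)
        selected[view_name] = picks
    return selected


# Hypothetical ranked views and a mix chosen by leadership.
VIEWS = {
    "big_bets": ["contract_analysis", "demand_forecast", "doc_summarizer"],
    "strategic_investments": ["contract_analysis", "demand_forecast", "data_platform"],
    "quick_wins": ["doc_summarizer", "demand_forecast", "faq_assistant"],
    "foundational": ["data_platform", "doc_summarizer"],
}
MIX = {"big_bets": 1, "strategic_investments": 1, "quick_wins": 2, "foundational": 1}

DRAFT = draft_portfolio(VIEWS, MIX)
```

Because the order of `MIX` determines which view gets first claim on a project that ranks highly in several views, changing that order changes the draft. That is a deliberate design choice in this sketch: it keeps the trade-off visible and leaves it with leadership rather than hiding it inside the code.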

The process is repeatable. The same AI project prioritization methodology applied each quarter produces a consistent, comparable view of the organization’s AI pipeline, one that leadership can present to the board not as a list of technology initiatives, but as a managed strategic portfolio with a clear and defensible rationale.

Operational Considerations for AI Project Prioritization

Operationalizing an AI project prioritization methodology requires more than a good process. It requires clear ownership, the right capabilities, and organizational reach. Ownership typically sits in or near the CAIO function, with a designated person or team accountable for running and maintaining the process. But AI portfolio prioritization is not an AI team activity done in isolation. It is inherently cross-functional. Collection, scoring, and assembly require input from business units, operations, finance, legal, and technology, among others. Depending on how an organization structures its AI governance function, portfolio prioritization can sit within or alongside it, giving it the authority and visibility it needs to be taken seriously and sustained over time.

The capability requirements are also significant. Running this process well requires a rare combination of AI fluency, business and operational context, and strategic perspective. That combination is genuinely hard to find in one place inside most organizations. And the process itself is continuous, not a one-time exercise. The full AI project prioritization cycle runs on a regular cadence, typically quarterly. But intake and collection are ongoing activities that happen anytime a new project idea surfaces, meaning the organization needs a mechanism for capturing and processing new candidates between formal prioritization cycles.

Is Your Organization Ready to Build an AI Portfolio?

Organizations that have made the shift from negotiation-based prioritization to a structured AI portfolio methodology consistently report the same changes. Projects that stall in deployment become less common because readiness gaps are identified before commitment rather than after. Resource conflicts surface before they cause damage rather than after budget and time have been lost. And the portfolio becomes something leadership can actively manage and defend rather than something that simply accumulated over time. The compounding effect is real, but it only becomes visible once the methodology is in place.

Consider these questions honestly:

- Do you have a consistent, evidence-based AI project prioritization methodology, or does prioritization still depend on who argues most persuasively?

- Does your intake process actively surface project candidates from across the organization, or do only the initiatives with vocal sponsors make it onto the list?

- Do you have the data you need to assess each project’s true organizational readiness, including data infrastructure maturity, technical capability, and change management capacity?

- Does your current AI project prioritization process account for the full complexity of each initiative, including its regulatory exposure, architectural demands, and adoption environment, and can you see clearly which projects are competing for the same constrained resources?

- Is your current AI portfolio something you could present to your board as a structured capital allocation decision with a clear and defensible rationale?

- Do you have both the AI fluency and the business and operational fluency needed to apply a rigorous AI project prioritization methodology consistently, quarter after quarter, as your project landscape and business priorities evolve?

The questions above are not hypothetical. Every one of them reflects a gap that shows up consistently in organizations that are active in AI but not yet seeing enterprise-level returns. If most of them are difficult to answer, or if the answers vary depending on who in your organization you ask, the AI project prioritization process is likely a significant part of why AI investment is not compounding the way it should. The cost of continuing with the current approach is real, and it compounds quietly until it is no longer quiet.

Strategy of Things works with mid-market organizations to design practical AI strategies and guide portfolio prioritization through our Fractional Chief AI Officer services. If the questions above surfaced gaps you recognize, that is worth a conversation before the next round of investment decisions is made.

Part 1 of this series discusses the importance of managing AI as a deliberately constructed portfolio rather than a collection of one-off projects in order to drive enterprise impact. This article is part of a continuing series aimed at providing senior leaders and managers with a practical working knowledge of artificial intelligence and how to manage it as a business capability.

