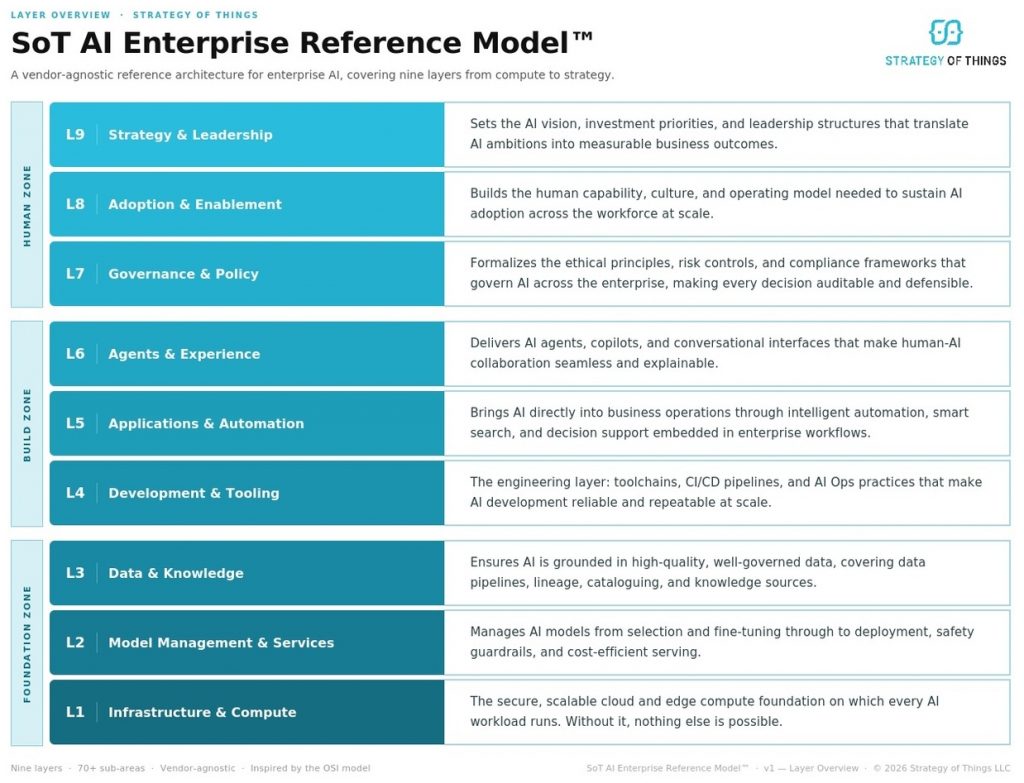

Introducing the SoT AI Enterprise Reference Model: an enterprise AI framework covering nine layers from infrastructure to strategy.

The core argument: If you read nothing else

Most AI initiatives fail not because the technology does not work, but because the organizational structure needed to turn AI activity into business impact is missing. No clear ownership. No principled way to prioritize investments. No governance that makes AI trustworthy at scale. No operating model that makes adoption stick.

This happens because most organizations treat AI as a technical capability. We treat it as a business capability. The distinction matters: business capabilities require both technical and organizational conditions to be met. Neither is optional. Neither delivers value without the other.

The SoT AI Enterprise Reference Model is a nine-layer framework inspired by the OSI model that has governed enterprise networking for 40 years. It maps every distinct capability cluster an organization needs to build and sustain AI at enterprise scale. From infrastructure through strategy. Technical and organizational dimensions treated as one inseparable system.

What this means for you: if your AI program is generating activity but not enterprise-wide impact, the gap is almost certainly in one or more of the nine layers. Identifying which ones is where the work begins.

Most AI programs are not failing because of the technology. They are failing because of what surrounds it.

The numbers should concern anyone who has signed an AI budget in the last two years.

According to McKinsey’s 2025 State of AI report, 88 percent of organizations now use AI in at least one business function. Yet only 31 percent have scaled it enterprise-wide. PwC’s 2026 Global CEO Survey found that 56 percent of chief executives reported neither higher revenues nor lower costs from their AI investments. Forrester estimates that only 10 to 15 percent of AI projects ever reach sustained production use.

Think about that for a moment. The majority of organizations are running AI somewhere. Most of them have nothing to show for it on the bottom line.

AI does not fail because the technology does not work. It fails because the structure needed to turn AI initiatives into enterprise impact is missing.

For a COO, this is not an abstract concern. AI embedded in a process without the right foundation beneath it does not just underperform. It creates operational risk that is harder to unwind than the original process it replaced. A workflow that now depends on a model that was never properly governed, grounded in clean data, or adopted by the people running it is not a productivity gain. It is a fragile dependency dressed up as progress.

What is missing, almost universally across industries and organization sizes, is the operating structure that connects AI activity to business outcomes. The strategy that connects investment to impact. The governance that makes AI trustworthy at scale. The data foundation that makes models reliable. The culture that makes adoption stick.

Most organizations approach AI the way you might renovate a house one room at a time, without ever looking at the floor plan. Individual projects get funded. Individual tools get deployed. Individual teams get excited. But without a systems view, the efforts remain disconnected, the wins stay local, and the enterprise-wide impact never materializes.

At Strategy of Things, we built our entire practice around a single conviction: enterprise AI requires a systems model, not a series of point solutions. Everything we do, from how we assess clients to how we prioritize use cases to how we make build-buy-partner decisions, flows from that model. This post introduces it.

AI is not a technical capability. It is a business capability. That distinction changes everything.

Most organizations approach AI as a technology problem. They hire data scientists, procure tools, stand up infrastructure, and measure progress in models deployed and APIs integrated. The technology layer gets serious investment and serious attention.

The business layers, including governance, adoption, strategy, culture, and operating model, are treated as secondary. Something to address once the technology is working. A change management program bolted on after the architecture is built. A governance policy written because the legal team asked for one.

This sequencing is the root cause of most AI program failures. Not because the technology did not work. Because the organizational conditions required to turn that technology into business value were never built.

Technology capability and business capability are not two phases of an AI program. They are two inseparable dimensions of a single system.

Consider what it actually takes for AI to deliver value in an enterprise. A model needs to exist and perform, and that is technology. But it also needs to be grounded in trustworthy data, which is data governance and organizational discipline. It needs to be embedded in a workflow that people actually use, which is process design and change management. It needs to operate within ethical and regulatory boundaries, which is governance and policy. And it needs to be connected to a business outcome that someone is accountable for, which is strategy and leadership.

Remove any one of those conditions and the model does not deliver value. Not less value. No sustained, enterprise-wide value. The chain is only as strong as its weakest link, and the weakest link is almost never the technology.

This is why we built a framework that treats technical and organizational capabilities as a single, inseparable system. Not because it makes for a more comprehensive slide. Because it reflects the reality of how AI value is actually created and sustained. A framework that covers only the technology layers is incomplete by design. A framework that covers only strategy and governance without the technical layers is ungrounded. Both are wrong in the same way: they treat as separable something that is not.

The SoT AI Enterprise Reference Model was built from this conviction. Every layer is load-bearing. None is optional. The goal is not to be comprehensive for its own sake. It is to map exactly the conditions that must be present for AI to deliver business value at enterprise scale, and nothing more.

We built this on the same design principle that has governed enterprise networking for 40 years.

In 1984, the International Organization for Standardization published the OSI model, the Open Systems Interconnection reference model for how data should move across a network. At the time, the networking world was a fragmented mess. Every vendor had its own proprietary protocols. Systems from different manufacturers could not communicate. The industry was racing ahead without a shared architecture.

The OSI model solved this with a deceptively simple idea: layered abstraction. Divide the problem into distinct layers, each with a clear responsibility. Lower layers enable upper layers. No layer needs to know how another layer does its job. The entire stack works regardless of which vendor’s hardware or software you use.

Four decades later, the OSI model remains the foundational reference architecture for every network engineer in the world. Not because it was mandated by regulation. Because it was right.

When we set out to build a reference model for enterprise AI, we asked ourselves what made OSI endure. The answer was its design philosophy: layered abstraction, clear responsibilities, enabling dependencies, and vendor neutrality. Those principles do not belong only to networking. They belong to any complex system that requires multiple interdependent capabilities working together reliably.

Enterprise AI is exactly that kind of system.

So we applied the same design principle to AI. Instead of describing how data moves across a network, our model describes how AI value is created and sustained across an organization. Like OSI, it is vendor-agnostic and works regardless of which AI tools, cloud platforms, or foundation models you use. Unlike OSI, it extends beyond technology to encompass people, governance, and strategy in a single coherent structure, because those dimensions cannot be separated from the technical ones without breaking the system.

The result is the SoT AI Enterprise Reference Model™.

Nine layers. Every capability your organization needs to build and sustain AI at enterprise scale. Nothing left out.

The model organizes the full enterprise AI capability stack into nine layers. Before walking through each one, it is worth explaining the logic that produced them, because understanding why the layers exist the way they do is what makes the model useful rather than just memorable.

Each layer represents a distinct cluster of capabilities, technologies, practices, and approaches that share enough in common to be treated as a coherent group. What makes a cluster distinct is that it requires specialized skills, tools, and expertise that are meaningfully different from those of every other layer. Where the nature of the work changes fundamentally, where different people, different tools, and different approaches are needed, that is where one layer ends and the next begins.

The layers also have a dependency structure. Lower layers are not less important than upper layers. They are more foundational. An organization with a sophisticated AI strategy and weak data infrastructure is not an organization with a partial AI capability. It is an organization whose strategy has no foundation to execute on. Strength at Layer 9 does not compensate for weakness at Layer 3. The layers are not a menu. They are a system.

Finally, the layers span technology and business by design. Not because we wanted to be comprehensive, but because the conditions required for AI to deliver enterprise value genuinely span both. The technology layers alone are necessary but not sufficient. The business layers alone are directional but not executable. Together, they describe the complete system.

What each layer addresses, and what weakness looks like

Here is what each layer is actually solving for, and the patterns we see when that layer is underdeveloped. These are not hypothetical failure modes. They are the consistent findings across enterprise AI engagements.

L1: Infrastructure & Compute

AI workloads are computationally intensive, unpredictable in scale, and require specialized hardware including GPUs, high-throughput storage, and low-latency networking that standard enterprise IT infrastructure was not designed to provide. This layer addresses the question of whether the organization can run AI workloads reliably, securely, and cost-efficiently at the scale the business requires.

When this layer is weak: Models that cannot scale beyond a pilot. Compute costs that spiral without visibility. Security incidents from ungoverned access to AI systems. AI workloads that are slow, unreliable, or unavailable precisely when the business needs them most.

L2: Model Management & Services

AI models are not static software. They degrade over time as the world changes, they drift as input distributions shift, and they require ongoing evaluation, fine-tuning, and lifecycle governance to remain safe, accurate, and cost-efficient. This layer addresses the question of whether the organization knows if its models are working correctly today and tomorrow.

When this layer is weak: Models deployed and forgotten, with no visibility into performance degradation. No process for evaluating new models against existing ones. Runaway inference costs from inefficient serving. Safety incidents from models operating outside their intended boundaries with no guardrails to catch it.

L3: Data & Knowledge

AI systems are only as good as the data and knowledge they are grounded in. This layer addresses the question of whether the organization’s data is of sufficient quality, with sufficient lineage and governance, to be trusted as the foundation for AI outputs. It is the layer where the claims AI makes are either traceable back to reliable sources or they are not. This is the layer most consistently underestimated, and the one whose weakness most reliably destroys value at every layer above it.

When this layer is weak: Models that produce plausible but wrong answers with no way to explain why. Inability to audit AI outputs for regulators, auditors, or customers. RAG systems that confidently retrieve stale or inconsistent information. Data that exists in abundance but cannot be used because its provenance cannot be established.

L4: Development & Tooling

Building AI systems reliably at enterprise scale requires engineering discipline that goes beyond standard software development. The experimental nature of AI, including the need to track experiments, manage prompt versions, test model behavior, and monitor production performance continuously, requires toolchains and practices specifically designed for AI’s unique characteristics. This layer addresses the question of whether the organization can build, test, deploy, and operate AI systems with the repeatability and rigor that enterprise use demands.

When this layer is weak: Every team builds differently, with no shared standards and no repeatability. Models that work in development and fail in production. No visibility into what was deployed, when, and by whom. Inability to roll back safely when something goes wrong. AI Ops treated as an afterthought until a production incident forces the conversation.

L5: Applications & Automation

AI capability that exists in infrastructure but is not embedded in workflows does not create business value. This is the layer where AI crosses from technical capability into operational impact, where automation replaces manual steps, where cognitive search surfaces the right information at the right moment, and where decision support puts evidence in front of the person making the call. This layer addresses the question of whether AI is actually changing how work gets done and whether those changes are measurable.

When this layer is weak: AI tools that exist but are not used. Productivity gains that stay local to one team and never compound across the organization. Use cases that are technically working but operationally marginal. Inability to point to a specific operational metric such as cost, throughput, error rate, or cycle time that AI has visibly moved.

L6: Agents & Experience

As AI moves from embedded automation to active collaboration, including agents that take actions, copilots that assist in real time, and multi-agent systems that orchestrate complex workflows, the design of how humans and AI interact becomes critical to both value and safety. This layer addresses the question of whether the organization can build and operate AI experiences that people trust, that maintain appropriate human oversight, and that are accessible to the people who need them rather than only to those with technical expertise.

When this layer is weak: Agents that take consequential actions without appropriate human oversight checkpoints. Copilots that users distrust because they cannot explain their reasoning. Interfaces that require AI literacy to operate, limiting adoption to a technical minority. Conversational capabilities that work in demos and fail in daily operational use.

L7: Governance & Policy

AI operating at enterprise scale creates ethical, regulatory, and operational risks that require formal governance structures to manage. This layer addresses the question of whether the organization can deploy AI responsibly, explain its decisions to those affected by them, demonstrate compliance to regulators, and hold someone accountable when things go wrong. Governance is not confined to this layer: data governance operates at L3, model safety at L2, prompt governance at L4, agent oversight at L6. L7 is where those threads are formalized, unified, and made auditable as an organizational discipline.

When this layer is weak: AI decisions that cannot be explained or challenged. Regulatory exposure from AI operating in sensitive processes without documented controls. No clear accountability when an AI system produces harmful or incorrect outcomes. Governance frameworks that exist on paper but are not operationalized in the systems and workflows they are meant to govern.

L8: Adoption & Enablement

Technology adoption in organizations requires deliberate investment in human capability, cultural conditions, and operating model redesign. AI is particularly demanding in this respect because it does not just add a new tool to existing workflows. It changes how work is organized, what skills are valued, and how decisions get made. This layer addresses the question of whether the organization is building the human and cultural conditions for AI to become a sustained, broadly used capability rather than a novelty adopted by a technically capable few.

When this layer is weak: Low utilization of AI tools despite significant investment. Workforce resistance rooted in fear, uncertainty, or lack of relevant skills. AI capability concentrated in a small number of individuals, creating fragility and limiting scale. AI initiatives that succeed technically and fail organizationally, where the tool works and the people do not change.

L9: Strategy & Leadership

Without executive direction, AI investments accumulate opportunistically rather than strategically, reflecting whoever lobbied hardest rather than what the business most needs. This layer addresses the question of whether the organization has a clear AI vision, a principled basis for investment decisions, measurable outcomes that leadership is accountable for, and the governance structure to ensure AI serves the enterprise’s goals at scale. It is the layer that gives all other layers their direction.

When this layer is weak: An AI portfolio that reflects internal politics rather than strategic logic. No defensible investment thesis to present to the board. AI strategy that shifts with every leadership conversation, preventing the accumulated capability required for enterprise impact. Individual layers performing well in isolation with no coherent system connecting them to outcomes the business cares about.

Each of the nine layers contains eight sub-areas, 72 in total, each scored on a maturity scale from zero to five. The depth is there for those who need it. The architecture is designed so the big picture is visible at a glance.
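For readers who think in data structures, the sketch below holds one assessment as nine lists of eight scores and reports the lowest-scoring layer. The layer names and the zero-to-five scale come from the model as described here. Everything else is an assumption made for illustration, in particular averaging sub-area scores into a layer score and breaking ties toward the more foundational layer. It is not the SoT scoring method.

```python
# Illustrative only: the aggregation rule (a simple mean) and the function
# names are assumptions for this sketch, not part of the published model.
LAYERS = {
    1: "Infrastructure & Compute",
    2: "Model Management & Services",
    3: "Data & Knowledge",
    4: "Development & Tooling",
    5: "Applications & Automation",
    6: "Agents & Experience",
    7: "Governance & Policy",
    8: "Adoption & Enablement",
    9: "Strategy & Leadership",
}
SUB_AREAS_PER_LAYER = 8          # 9 layers x 8 sub-areas = 72 scores
MIN_SCORE, MAX_SCORE = 0, 5      # maturity scale from the article


def layer_maturity(scores):
    """Return {layer number: mean sub-area score} after validating the input."""
    if set(scores) != set(LAYERS):
        raise ValueError("scores must cover exactly layers 1 through 9")
    result = {}
    for layer, sub_scores in scores.items():
        if len(sub_scores) != SUB_AREAS_PER_LAYER:
            raise ValueError(f"layer {layer} needs {SUB_AREAS_PER_LAYER} scores")
        if any(not MIN_SCORE <= s <= MAX_SCORE for s in sub_scores):
            raise ValueError(f"layer {layer} has a score outside 0 to 5")
        result[layer] = sum(sub_scores) / len(sub_scores)
    return result


def weakest_layer(scores):
    """Return the lowest-scoring layer; ties go to the lower (more foundational) layer."""
    per_layer = layer_maturity(scores)
    return min(per_layer, key=lambda layer: (per_layer[layer], layer))


# Hypothetical assessment: every sub-area at 3, except a weak data layer.
example = {layer: [3] * SUB_AREAS_PER_LAYER for layer in LAYERS}
example[3] = [1] * SUB_AREAS_PER_LAYER
print(LAYERS[weakest_layer(example)])  # prints "Data & Knowledge"
```

Reporting the minimum rather than an overall average reflects the point made earlier: strength at Layer 9 does not compensate for weakness at Layer 3.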

This is, to our knowledge, the first framework to unify technical architecture, operational capability, and leadership accountability in a single measurable structure. Most AI frameworks address one of these dimensions. The SoT AI Enterprise Reference Model™ addresses all three, because in our experience, weakness in any one of them is sufficient to stall an AI program.

The three zones tell every stakeholder in your organization exactly where they fit and what depends on them.

With the logic of the nine layers established, the three zones become self-evident. They are not an organizational convenience. They are the natural groupings that emerge when you look at which layers share the same category of work, the same primary stakeholders, and the same type of organizational challenge.

Zone: Foundation (L1 to L3). Infrastructure, models, and data.

These three layers share a common character: they are the technical substrate that everything above depends on. The work here is primarily engineering and data discipline. The question the Foundation Zone answers is: can we run AI reliably, at scale, on trustworthy information? If the answer is no, every layer above is built on sand.

Zone: Build (L4 to L6). Development, applications, and agents.

These three layers share a common character: they are where AI becomes visible to the business. The work here spans engineering, product design, and workflow integration. The question the Build Zone answers is: can we build, deploy, and operate AI systems that deliver value in real workflows? This is where most pilots happen and where most organizations get stuck when the layers beneath them are not solid.

Zone: Human (L7 to L9). Governance, adoption, and strategy.

These three layers share a common character: they are where AI becomes a sustained enterprise capability rather than a collection of technical assets. The work here is leadership, organizational design, change management, and governance. The question the Human Zone answers is: do we have the human and organizational conditions to make AI work at enterprise scale and keep it working? Without the Human Zone, every success in the Foundation and Build Zones remains fragile.

If you are an operational or executive leader, read the zones in reverse. Start with the Human Zone: do you have the strategy, governance, and organizational capability to sustain AI as a business function? Then the Build Zone: are AI capabilities embedded in workflows where they generate real, measurable operational value? Then the Foundation Zone: is the technical foundation underneath those workflows stable and trustworthy enough to depend on? That is the order in which outcomes depend on capability, even if the architecture is built from the bottom up.

A CTO lives in the Foundation Zone. A Chief Digital Officer lives in the Build Zone. A COO, CEO, and board live in the Human Zone. The model gives every stakeholder a clear entry point, while making visible how their layer connects to everyone else’s.
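The zone groupings can also be written down as a simple lookup. This is a minimal sketch; the zone names and layer ranges are taken from the text above, while the function names are hypothetical and exist only for this example.

```python
# Zone names and layer ranges as described in the article, listed bottom-up.
ZONES = {
    "Foundation": (1, 2, 3),   # infrastructure, models, data
    "Build": (4, 5, 6),        # development, applications, agents
    "Human": (7, 8, 9),        # governance, adoption, strategy
}


def zone_of(layer):
    """Return the zone name that contains the given layer number (1 to 9)."""
    for zone, layers in ZONES.items():
        if layer in layers:
            return zone
    raise ValueError(f"unknown layer: {layer}")


def executive_reading_order():
    """Return the zones top-down, the order suggested for operational leaders."""
    return list(ZONES)[::-1]


print(zone_of(3))                   # prints "Foundation"
print(executive_reading_order())    # prints ['Human', 'Build', 'Foundation']
```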

Now that you understand the model, Part 2 shows how it drives every decision we make.

This post has introduced the SoT AI Enterprise Reference Model™, the logic behind its nine layers, and the design philosophy that produced it. What it has not yet addressed is what the model actually does in practice: how it informs build-buy-partner decisions, how it drives use case prioritization, how it shapes an AI strategy document, and how it gives organizations a structured way to assess where they are and what to do next.

That is the subject of Part 2.

This article is part of a continuing series aimed at providing senior leaders and managers with a practical working knowledge of artificial intelligence and how to manage it as a business capability.
