Why AI Projects Fail to Deliver: It’s Not a Technology Problem

If you are responsible for AI in your organization, whether as a Chief AI Officer, a CEO or COO with AI on your agenda, or a senior leader who has been handed accountability for making AI work, this pattern will feel familiar. AI projects are running across departments. Vendors are engaged. Leadership has made the announcements. In many cases, individual initiatives have shown genuine promise. Specific workflows are faster. Certain decisions are better supported. Teams report real productivity improvements at the project level.

And yet, when you step back and look at the business as a whole, the picture is less clear. Revenue hasn’t moved materially. Margins haven’t improved in ways the board or the ownership group can point to. Competitive position looks roughly the same. The AI activity is real. The business impact is not.

The instinct is to look at the technology. A different model, a better vendor, a more sophisticated use case. But in most organizations, the technology is not the problem. Something structural is missing. And until that structural gap is addressed, the pattern repeats: more pilots, more activity, limited impact.

The Data Confirms What Many Leaders Are Already Experiencing

This is not an isolated organizational challenge. Research from major consulting, advisory, and research organizations tells a consistent story, and it is sobering regardless of the size of your organization.

McKinsey’s 2025 State of AI report, drawing on responses from nearly 2,000 participants across 105 countries, found that 88% of organizations now use AI in at least one business function. But nearly two-thirds have not yet begun scaling AI across the enterprise. Only 31% report scaling AI enterprise-wide. And only 39% of organizations report any measurable effect on enterprise-level EBIT from AI. Of those, the vast majority say AI accounts for less than 5% of EBIT. Just 5.5% of organizations, roughly 1 in 18, report that AI is driving significant enterprise value. [1]

PwC’s 2026 Global CEO Survey of 4,454 chief executives across 95 countries reported that 56% of respondents saw neither higher revenues nor lower costs from AI. [2] Research firm Forrester estimates that only 10–15% of AI projects reach sustained production use and that over 60% of pilots fail to scale beyond controlled environments. [3] The RAND Corporation reports that, by some estimates, more than 80% of AI projects fail, roughly twice the failure rate of IT projects that do not involve AI. [4]

These findings span organizations of all sizes, from large enterprises with dedicated AI teams and significant budgets to mid-market companies working with far more constrained resources. The structural gap does not discriminate by company size. If anything, its consequences are felt more acutely in organizations where every dollar of AI investment has to earn its return and where there is no deep reservoir of capital to fund a do-over.

Pilot ROI and Business Impact Are Not the Same Thing

At this point, a reasonable objection arises. “We are getting impact,” leaders will say. “Our pilot saved 20 hours a week. Our use case improved customer response times. The productivity gains are real.”

That is true, and it is important to acknowledge it. Pilot-level results are real results. But pilot-level ROI and enterprise-level impact are fundamentally different measurements. Conflating the two is one of the most common and costly mistakes organizations make in their AI programs.

A pilot operates in a controlled environment. Data is often curated manually. Integrations are built as one-off connections. Workflows that appear automated may still rely on human review. These shortcuts are appropriate for validating a concept quickly, but they also mean the pilot is not a miniature version of the operating environment it will eventually have to run in.

Moving from pilot success to operational reality requires an entirely different set of investments: system integration, process redesign, change management, workforce retraining, infrastructure upgrades, governance frameworks, and compliance review. Each of those has a cost. The time saving or productivity gain demonstrated in the pilot has to survive that cost and complexity before it shows up in business performance. In many cases it does not, not because the AI failed, but because the integration and operationalization work was never properly funded, planned, or coordinated.

For mid-market organizations, this gap is particularly consequential. A large enterprise can absorb a stalled AI initiative. It is expensive and frustrating, but survivable. A mid-market company operating with limited IT resources, a lean leadership team, and a constrained budget does not have that buffer. When an AI investment fails to make it from pilot to production, or delivers local productivity gains that never reach the bottom line, the cost is felt directly. There is no war chest to fund a retry. There is no large team to absorb the lessons and quickly try again. Getting it right the first time is not a preference. It is a business necessity.

McKinsey’s research underscores the integration challenge. Of all the organizational factors linked to AI success, fundamental workflow redesign ranks highest in its correlation with measurable business impact. Yet only 21% of organizations using AI have redesigned at least some workflows. The vast majority, nearly 80%, are layering AI on top of existing processes without rethinking how work actually flows. [1] The pilot impact stays local. It never reaches the business.

Now Multiply That Across Every Initiative You’re Running

Scaling one pilot into an operational capability is hard. The integration work is significant, the change management is real, and the path from proof of concept to production is longer and more expensive than most organizations plan for.

But most organizations are not managing one pilot. They are managing several, running simultaneously, across different departments, with different owners, different vendors, different timelines, and competing demands on the same limited pool of IT resources, integration budget, and leadership attention. For a large enterprise, that might mean twenty or thirty initiatives. For a mid-market company, it might be five or eight. The number is less important than the coordination challenge it creates.

At that scale, the nature of the problem shifts entirely. It is no longer primarily a technical problem, or even a project management problem. It becomes a coordination, prioritization, and governance challenge. Consider what happens without a formal structure to manage it:

- Multiple initiatives compete for the same limited budget and IT resources, with no principled way to decide which ones move forward

- Teams in different departments procure overlapping tools and build duplicative infrastructure, because no one has visibility across the portfolio

- Ownership becomes fragmented. When a pilot is ready to scale, no one has clear authority or budget to take it from experiment to operation

- Risk accountability becomes unclear. When an AI system generates a bad recommendation in a customer-facing workflow, who is responsible?

- Each integration project goes through the same expensive, slow process independently, with no shared infrastructure, standards, or lessons learned

The result is familiar to anyone who has led AI at the organizational level. Lots of activity. Some local wins. And a persistent, frustrating inability to connect any of it to meaningful business performance.

Some organizations recognize this and attempt to address it informally, with a steering committee, a shared Slack channel, or a quarterly AI review meeting. These efforts reflect the right instinct, but they rarely hold. Without a formal operating structure backed by defined authority, clear accountability, and executive commitment, coordination defaults back to whoever has the loudest voice or the most budget. The structural problem reasserts itself.

A Different Vantage Point Changes Everything

Here is the core problem. Most organizations are evaluating AI initiative by initiative, pilot by pilot, use case by use case. Each team is optimizing for its own local outcome: did the pilot work? Did it save time? Did the model perform? These are legitimate questions, but they are the wrong unit of analysis if the goal is business impact.

Business impact requires a different vantage point, one that looks at AI not as a collection of individual projects but as a business capability. A capability that has to be directed, invested in, governed, integrated, and scaled in a way that produces measurable outcomes at the level of the whole organization.

From that vantage point, the questions change entirely. Not just “did this pilot deliver ROI?” but: Does this initiative align with where the business is going? Can it survive integration into our operations? Does it compete with three other initiatives for the same IT resources? Who owns it when it moves from experiment to operation? How do we measure its contribution to business performance, beyond team-level productivity? And critically for mid-market organizations: is this the right place to be spending our limited AI budget, given everything else we could be pursuing?

This is the vantage point that is missing in most organizations. And it is the vantage point that the AI Operating Function is designed to provide.

What Is Actually Needed: The AI Operating Function

The AI Operating Function is the leadership and management structure that allows AI initiatives to be directed, prioritized, governed, and scaled as a coordinated business capability rather than a collection of independent experiments. You may have seen this concept described elsewhere as an AI operating model. We define it more precisely, because the challenge is not just designing a model on paper. It is building the active leadership and management discipline that makes the model work in practice.

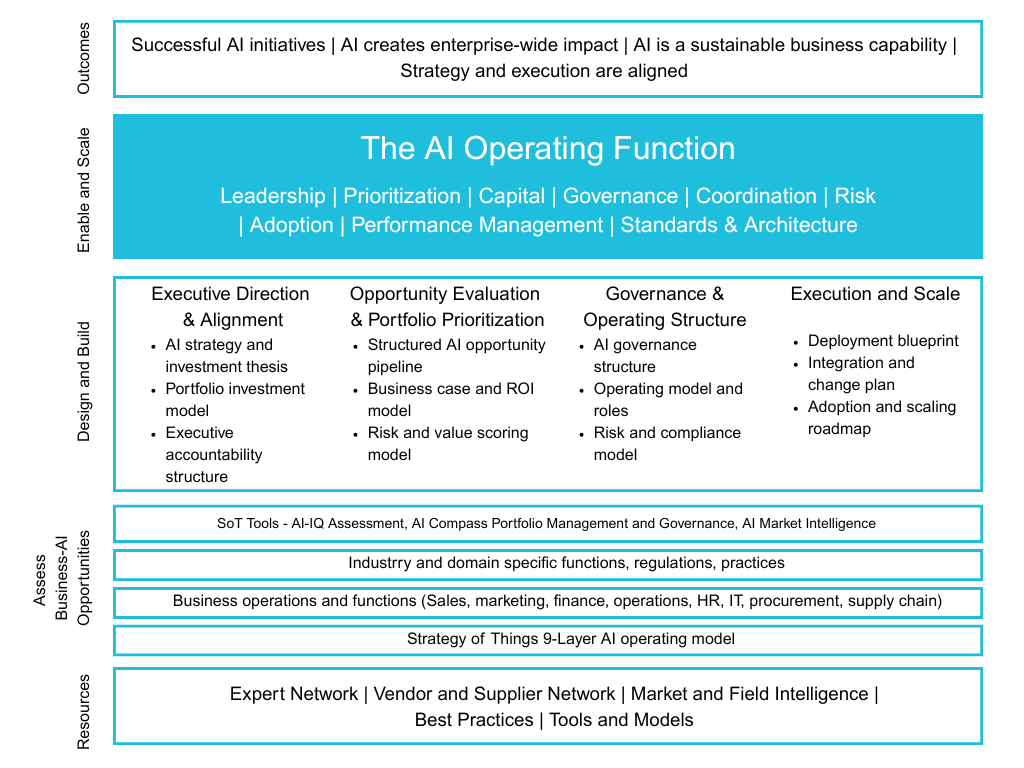

Figure 1. The AI Operating Function: Leadership | Prioritization | Capital | Governance | Coordination | Risk | Adoption | Performance Management | Standards & Architecture

It is worth being clear about what it is not. It is not a tool or software platform. It is not a single job title. It is not a center of excellence sitting at the side of the organization reviewing what everyone else is doing. It is an operating structure, the management discipline that makes AI work at organizational scale.

Consider how most organizations manage their finance function. Finance is not a spreadsheet. It is not a collection of departmental budget owners doing independent accounting. It is a coordinated business capability with a defined strategy, governance structures, investment frameworks, risk oversight, and performance management mechanisms. Every department touches finance, but the finance function ensures that all of that activity is coordinated, governed, and aligned with the organization’s performance goals.

AI requires the same treatment. The AI Operating Function provides the equivalent management infrastructure for AI, ensuring that all of the AI activity happening across the organization is directed, prioritized, governed, and measured as a coordinated business capability.

Importantly, this structure is not one-size-fits-all. An early-stage mid-market company running a handful of AI initiatives does not need the same depth of structure as a global enterprise managing dozens. But some version of this structure is necessary at every scale, because the absence of it creates the same problems regardless of organization size. The right design reflects where your organization is today: its AI maturity, the complexity of its operations, the constraints on its resources, and the strategic priorities that matter most right now.

McKinsey’s research on AI high performers is instructive. Nearly half of respondents in high-performing organizations report that senior leaders show clear ownership and long-term commitment to AI, actively sponsoring initiatives, protecting AI budgets, and role-modeling usage, compared with only about 16% in lagging organizations. High performers follow a full-stack management approach spanning strategy, talent, operating model, technology, data, and adoption. They did not get better results by finding better models. They got better results by building better management infrastructure around AI. [1]

How the Function Works: The Four Pillars

The AI Operating Function is built around four coordinated areas of leadership that together determine how AI is directed, evaluated, governed, and scaled across the organization. Each pillar addresses a specific and recurring failure point. Together, they close the gap between AI activity and AI impact.

Setting the Direction

AI must begin with clear executive direction. This means defining the organization’s AI ambition, aligning AI investments with business priorities, and establishing the accountability structures that guide how AI decisions are made. Without this, AI investments reflect whatever individual departments happen to be excited about rather than where the business needs to go. For mid-market organizations, this pillar also addresses a resource allocation question that is impossible to avoid: given what we can actually spend and who we actually have, where should AI be playing in our business?

Making the Right Bets

Most organizations generate more AI ideas than they can responsibly pursue. A structured opportunity evaluation and portfolio prioritization process ensures that leadership focuses resources on the initiatives with the greatest potential impact, sequenced in a way that is disciplined, defensible, and aligned with measurable outcomes. Business cases and ROI models are developed before investments are committed, not after a pilot has already been built. This is especially critical for mid-market organizations, where funding a low-priority initiative at the expense of a high-impact one is not just an efficiency problem. It is a strategic mistake with real consequences.

Governing What Gets Built

AI initiatives span multiple parts of the organization, including data, infrastructure, operations, risk, compliance, and workforce adoption. Without a governance and operating structure that coordinates these moving parts, decision rights become unclear, accountability becomes fragmented, and risk exposure increases. The governance pillar defines who owns what, establishes risk oversight and compliance structures, coordinates cross-functional dependencies, and creates the reporting mechanisms that allow leadership to see what is happening across the portfolio. For organizations without a dedicated AI team, this pillar also clarifies who is responsible for AI decisions when there is no obvious single owner.

Making It Real

AI creates value only when it moves beyond pilots and becomes part of real business operations. The execution and scale pillar addresses integration into operational workflows, alignment with existing systems, workforce adoption, and the change management required for AI to deliver sustained outcomes rather than isolated proofs of concept. This is where the gap between pilot ROI and business impact is finally closed, or where it is left open by default. For mid-market organizations, this pillar also means making deliberate decisions about what to build internally, what to buy, and where to partner, because trying to do everything with limited resources is one of the fastest ways to stall an AI program.

A Note on the Mid-Market Reality

Mid-market organizations face a version of this challenge that is in some ways harder than what large enterprises deal with. The structural gap is the same, but the resources available to close it are fundamentally different.

Most mid-market companies do not have a dedicated AI team, a Chief AI Officer, or an enterprise architecture group that can absorb coordination informally. The IT function is often stretched thin managing existing systems. The leadership team is already wearing multiple hats. And the capital available for AI investment is limited, which means every dollar has to work and there is no budget for experiments that do not produce returns.

At the same time, the competitive pressure to move on AI is real and growing. Mid-market companies are not just competing against peers of similar size. They are competing against larger organizations that have more resources to invest in AI, and against newer, more agile competitors that are building AI-native operations from the ground up. Falling behind on AI is not an abstract concern. It is a concrete competitive risk that compounds over time.

This creates a real tension. Mid-market organizations need the AI Operating Function more urgently than their larger counterparts in some respects, because they have less margin for error, but they are also less able to build and staff a full internal AI leadership function. The good news is that they do not need to. The AI Operating Function scales to the size and maturity of the organization. A mid-market company in the early stages of its AI journey needs a lighter, more focused version of this structure, one that establishes clear prioritization, basic governance, and executive alignment without requiring a large team or a large budget to operate.

This is precisely why many mid-market organizations are turning to fractional Chief AI Officer leadership, to access enterprise-grade AI management capability without the cost and overhead of building a permanent executive AI function. The goal is to get the structure right from the beginning, so that every dollar invested in AI is working toward a return and the organization is not later having to undo misaligned investments at significant cost.

How to Know If You’re Missing It

The absence of an AI Operating Function is often not immediately obvious. Try answering these questions honestly.

- When multiple AI initiatives compete for budget, how does your organization decide which ones move forward and in what order? Is that decision made systematically, or by whoever argues most convincingly?

- Who ultimately owns AI decisions across the organization, not within a department, but across the business as a whole?

- When a pilot shows promise, who is responsible for scaling it into an operational capability? Do they have the authority, resources, and budget to actually do it?

- Before a new initiative launches, does your organization confirm that the data, systems, and teams required to support it are actually ready?

- When AI-related risks arise, whether compliance, bias, security, or operational failure, who is accountable? Is that clearly understood before something goes wrong?

- Can you clearly connect your current AI investments to measurable business outcomes, not at the pilot level, but at the level of overall business performance?

If these questions feel difficult, inconsistent, or handled differently depending on which team you ask, the AI Operating Function in your organization is likely informal or undefined. That is common across organizations of all sizes. Most have not yet built this structure. But the absence of it is what keeps AI activity from becoming business impact, and in organizations where resources are constrained and the cost of misaligned investment is high, that absence carries a real price.

What You Can Do Now

Building an AI Operating Function does not require a complete organizational overhaul or a large team before you can start. The following steps allow you to begin assessing your current state, identifying where the structural gaps are, and taking the first practical actions to close them, regardless of whether you are a large enterprise or a mid-market organization working with limited resources.

- Take stock of your current AI portfolio. Map every active initiative across the organization: who owns it, what it is intended to achieve, how it is being funded, and how prioritization decisions are currently being made. If this exercise is harder than it should be, that itself is a signal about the state of your operating structure. For mid-market organizations, this inventory is often shorter than expected, and the coordination gaps tend to become immediately visible once everything is written down.

- Identify where promising pilots are stalling. For each initiative that has shown pilot-level success but has not scaled, ask honestly whether the blocker is technical or structural. Is the issue data readiness? System integration costs that were not in the original budget? Unclear ownership of the scaling work? In most cases, the blocker is structural, and no additional technology investment will resolve it.

- Assess your governance gaps. Look at where decision rights are unclear, where risk accountability is undefined, and where cross-functional coordination is breaking down. For organizations without a dedicated AI leadership function, these gaps are often significant. Addressing them does not require a large team. It requires clear decisions about who owns what.

- Ask whether AI is being managed as a capability or as a set of independent projects. If each initiative is being evaluated and managed in isolation, with no shared infrastructure, no common prioritization framework, and no organization-level performance view, your organization is managing AI as a series of experiments rather than as a business capability. That distinction is the starting point for building the structure that changes it.

- Consider whether you have the AI leadership your organization actually needs. For many mid-market organizations, the honest answer is that the AI Operating Function requires expertise and dedicated leadership that does not currently exist inside the organization. Acknowledging that gap is not a failure. It is the starting point for addressing it. Whether through a dedicated internal role, a fractional arrangement, or an advisory engagement, putting the right leadership structure in place is the precondition for everything else.

The Window Is Open. But It Won’t Stay Open Indefinitely.

The research is clear, and the pattern is consistent across industries and organization sizes. AI adoption is widespread. Business impact is not. The gap between the two is not a technology problem. It is a structural one. Organizations that close it do so not by finding better AI tools, but by building the management infrastructure required to direct, prioritize, govern, and scale AI as a coordinated business capability.

For large enterprises, this is about unlocking the value that significant AI investments are not yet delivering. For mid-market organizations, the stakes are higher and more immediate. Every dollar invested in AI without the right structure behind it is a dollar at risk. Every stalled pilot is a missed opportunity that a leaner organization cannot easily afford. And every month spent managing AI as a set of disconnected experiments is a month that competitors, whether larger, better resourced, or simply more structured, are pulling further ahead.

The organizations that build this structure now, at whatever scale is appropriate for where they are today, will scale AI faster, integrate it more deeply into their operations, and compound their advantage over time. The organizations that do not will continue to accumulate pilots, generate local wins, and wait for the business impact that never quite arrives.

If any of the diagnostic questions in this article felt difficult to answer, or surfaced gaps you recognize, it may be time to have a conversation about what an AI Operating Function would look like in your organization. Whether you are a large enterprise trying to coordinate a sprawling AI portfolio, or a mid-market company making sure every AI dollar earns its return, the starting point is the same: the right structure, designed for where you are today.

We would welcome that conversation. Reach out to us at Strategy of Things.

References

[1] “The State of AI in 2025: Agents, Innovation, and Transformation.” McKinsey & Company / QuantumBlack. November 2025.

[2] “PwC’s 29th Global CEO Survey: Leading through uncertainty in the age of AI.” PwC. January 2026.

[3] Pandey, T. “Forrester picks holes in IT’s AI story, say just 10–15% pilots scale.” The Economic Times. January 22, 2026.

[4] Ryseff, J., De Bruhl, B., and Newberry, S. “The Root Causes of Failure for Artificial Intelligence Projects and How They Can Succeed.” RAND Corporation. August 2024.

[5] “The Widening AI Value Gap: Build for the Future 2025.” Boston Consulting Group. September 30, 2025.

This article is part of a continuing series aimed at providing senior leaders and managers with a practical working knowledge of artificial intelligence and how to manage it as a business capability.